


Posts tagged "HQ-SAM paper explanation" (1)

Study With Inha

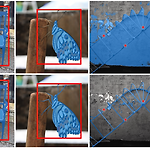

[Paper Review] HQ-SAM, a Segment Anything follow-up that produces high-resolution results


Segment Anything in High Quality, ETH Zurich. Paper link: https://arxiv.org/abs/2306.01567. Abstract excerpt: "The recent Segment Anything Model (SAM) represents a big leap in scaling up segmentation models, allowing for powerful zero-shot capabilities and flexible prompting. Despite being trained with 1.1 billion masks, SAM's mask prediction quality falls short in.." Introduction: This year..

Paper Review

2023. 7. 27. 12:17