List: LLM (2)

Study With Inha

[Paper Review] NeurIPS 2023, StableRep: Synthetic Images from Text-to-Image Models Make Strong Visual Representation Learners

Google Research, accepted at NeurIPS 2023. StableRep: Synthetic Images from Text-to-Image Models Make Strong Visual Representation Learners. Paper link: https://arxiv.org/pdf/2306.00984.pdf StableRep is a paper accepted at NeurIPS 2023, and it was selected among the major NeurIPS 2023 research topics summarized by LG AI Research. LG AI Research blog: https://www.lgresearch.ai/blog/view?seq=379 ([NeurIPS 2023] Key Research Topics and Notable Papers - LG AI Research BLOG) NeurIPS 2023,..

[Paper Review] (BLIP, BLIP-2) Bootstrapping Language-Image Pre-training: Explanation and Paper Review

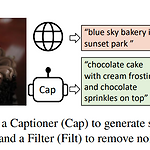

BLIP: Bootstrapping Language-Image Pre-training for Unified Vision-Language Understanding and Generation. Paper link: https://arxiv.org/abs/2201.12086 Vision-Language Pre-training (VLP) has advanced the performance for many vision-language tasks. However, most existing pre-trained models only excel..